Daniel Rothschild

The recent advances in AI are powered by learning algorithms that allow computers to develop abilities through training on data. These advances provide an unprecedented opportunity to study learning from a new angle: we now have powerful learning systems whose internals we can inspect, whose training we can control, and whose performance we can measure precisely. This gives us new traction on foundational questions about the nature of learning that have long resisted resolution.

This module uses machine learning as a window into those questions. It covers the foundations of machine learning from a theoretical and conceptual perspective, develops a taxonomy of modern ML paradigms, and asks what the remarkable recent successes of AI tell us about how learning works — in machines and, more cautiously, in biological minds.

Classes will mostly be on Tuesdays from 1-4pm in the seminar room, 19 Gordon Square, 101. We will always have a 20-30 minute break.

The module will be assessed by a 3500-word essay.

All unlinked readings are available here (ask the instructor for the password).

All staff and students are welcome at any sessions.

This class will cover quite a few topics in machine learning and some on human learning. If you want to read or listen to a semi-popular book that covers a lot of recent background, I highly recommend Tom Griffiths’ new book, Laws of Thought, which will give you useful background on symbolic AI, neural networks, language acquisition, and Bayesianism.

Online courses on machine learning are another useful resource. There are many of them; one example is Andrew Ng’s machine learning course, the classic version of which is on YouTube. The practical programming side of these courses will not be useful for this course, but the basic theory will be.

28 APRIL: LEARNING AS SEARCH

Learning, across all its forms, can be understood as search through a space of possible systems guided by experience — a framework broad enough to encompass Bayesian updating, standard paradigms of machine learning, and human cognitive development.

- Plato, Meno (selections)

- Fodor, Language of Thought (selections)

- Margolis and Laurence, selections

- Newell and Simon, “Computer Science as Empirical Inquiry: Symbols and Search” (1976)

- Easwaran, Bayesianism, Philosophy Compass

- Dehaene, How We Learn (chapter)

Optional: Leibniz, New Essays (selections); Rothschild, “The Scope of Bayesianism”

5 MAY: LEARNING AS A COMPUTATIONAL PROCESS

The Church-Turing thesis and the theory of computation give precise content to the idea of learning as search. The key insight, however, is that universality is nearly vacuous as a constraint: what matters is efficiency and inductive bias. This session introduces neural networks and backpropagation as the answer that has actually worked.

- Turing, “Computing Machinery and Intelligence” (1950)

- Copeland, “The Church-Turing Thesis,” Stanford Encyclopedia of Philosophy

- Aaronson, “Why Philosophers Should Care About Computational Complexity” (2013)

- Hinton, “How Neural Networks Learn from Experience”

Background: Valiant, Probably Approximately Correct; Valiant, PAC learning paper

12 MAY: A TAXONOMY OF MACHINE LEARNING

Gradient descent over parameterized functions is the unifying engine behind the apparent diversity of modern AI successes — language models, image generation, game play — and this session develops a taxonomy of machine learning paradigms that reveals the underlying unity, with supervised learning as the central and most powerful paradigm.

- Rothschild, “The New Modest Associationism: Lessons from Deep Learning”

- LeCun, Bengio and Hinton, “Deep Learning,” Nature (2015)

- McClelland, Rumelhart and Hinton, “The Appeal of Parallel Distributed Processing” (1986, selections)

Optional: Smolensky, “On the Proper Treatment of Connectionism” (1986)
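
For anyone who wants a concrete picture of what “gradient descent over parameterized functions” means in the supervised case, here is a minimal sketch. It is not taken from any of the readings: the function name `fit_line`, the toy data, and the learning rate are all assumptions chosen for illustration. The parameterized function is a straight line with two parameters, and learning is a repeated small step downhill on the mean squared error.

```python
def fit_line(xs, ys, lr=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on mean squared error.

    Hypothetical helper for illustration only; w and b are the two
    parameters being searched over.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient, scaled by the learning rate.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy labelled data generated by the line y = 2x + 1 (an assumption).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w, b = fit_line(xs, ys)
print(round(w, 3), round(b, 3))
```

Neural networks replace the line with a function that has far more parameters, but the loop has the same shape: compute the error on labelled examples, compute its gradient, and adjust the parameters slightly.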

19 MAY: REINFORCEMENT LEARNING AND MOTIVATION

Reinforcement learning is introduced technically — temporal difference learning, value functions, Deep Q-learning — before the session pivots to ask what the reward signal actually is for human learners, whether understanding itself can be intrinsically rewarding, and what kind of values are coherent enough to specify an objective function at all.

- Sutton and Barto, Reinforcement Learning: An Introduction, Chapter 1

- Gopnik, “Explanation as Orgasm” (1998)

- Christian, The Alignment Problem (selections)
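
As a rough illustration of the temporal difference learning mentioned in the session description, the sketch below runs tabular TD(0) on a toy task. Everything specific here is an assumption made for illustration, not something drawn from the readings: the function name `td0_chain`, the four-state chain in which the agent always moves right, the single reward of 1 on reaching the end, and the discount and step-size values.

```python
def td0_chain(n_states=4, gamma=0.9, alpha=0.1, episodes=500):
    """Estimate state values with tabular TD(0) on a deterministic chain.

    Hypothetical toy environment: the agent starts in state 0, always
    moves one state to the right, and receives reward 1 on the step
    that enters the terminal state (reward 0 otherwise).
    """
    # One extra slot for the terminal state, whose value stays at 0.
    values = [0.0] * (n_states + 1)
    for _ in range(episodes):
        state = 0
        while state < n_states:
            next_state = state + 1
            reward = 1.0 if next_state == n_states else 0.0
            # TD error: how much better or worse things went than predicted.
            td_error = reward + gamma * values[next_state] - values[state]
            # Nudge the prediction for this state towards the new estimate.
            values[state] += alpha * td_error
            state = next_state
    return values[:n_states]


values = td0_chain()
print([round(v, 3) for v in values])
```

The learner never sees the true values; each state's estimate is corrected only by the reward and the current estimate for the next state. In this toy case the estimates settle near the discounted rewards, with states closer to the reward valued more highly.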

2 JUNE: LLMS: ASSOCIATIONISM AND FAST INFERENCE

The taxonomy from previous sessions might suggest that the dominant learning mechanism in modern AI is essentially associationist — gradual, error-driven, domain-general — and this session asks how slow associationist training produces systems capable of fast, flexible, apparently reasoning-like behavior at inference time, with language emerging as the key to the answer.

- Mandelbaum and Millière, “Associationist Theories of Thought,” Stanford Encyclopedia of Philosophy (2025)

- Mahowald et al., “Dissociating Language and Thought in Large Language Models,” Trends in Cognitive Sciences (2024)

- Bubeck et al., “Sparks of Artificial General Intelligence” (2023, selections)

4 JUNE 1-3pm: STUDENT PRESENTATIONS

9 JUNE: LANGUAGE AND LEARNING

Only AI systems trained extensively on natural language exhibit powerful domain-general reasoning, and this session argues that the explanation lies in language’s properties as a compression system — making general inference computationally tractable — with implications for the longstanding debate about the role of language in human thought.

- Rothschild, “Language and Thought: The View from LLMs”

- Lupyan and Bergen, “How Language Programs the Mind” (2016)

Supplementary: Fedorenko et al., “Language is Primarily a Tool for Communication Rather than Thought” (2024); Griffiths et al., “Whither Symbols in the Era of Advanced Neural Networks?”

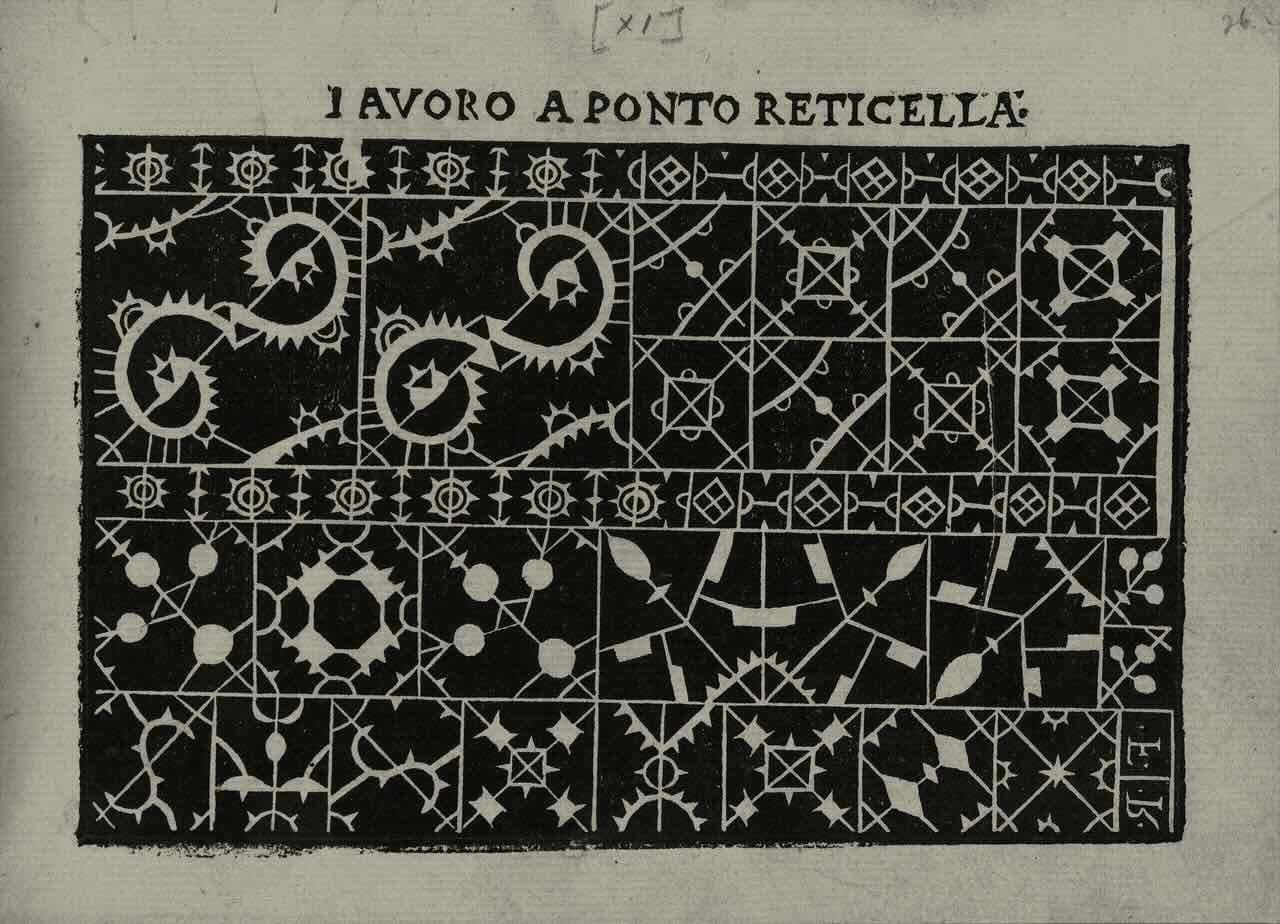

Image: Lace pattern woodcut by Isabella Catanea Parasole, 1600